#Opendata: notes on the way to (II)

In a past entry I proposed treating a dataset as something similar to software code. This time I want to go one step further and talk about free visualization.

Open data means you can access a dataset and use it. What you can do with the data depends on the license the publisher chooses. I couldn’t find anything serious in this direction (feedback welcome in the comments).

The recipe

If I tell you about a nice recipe, I usually tell you about the ingredients and about the process. If I only tell you about the ingredients, you are not able to reproduce my recipe. You also cannot try to improve it by introducing some changes. When we talk about reproducibility, it is necessary to know the process.

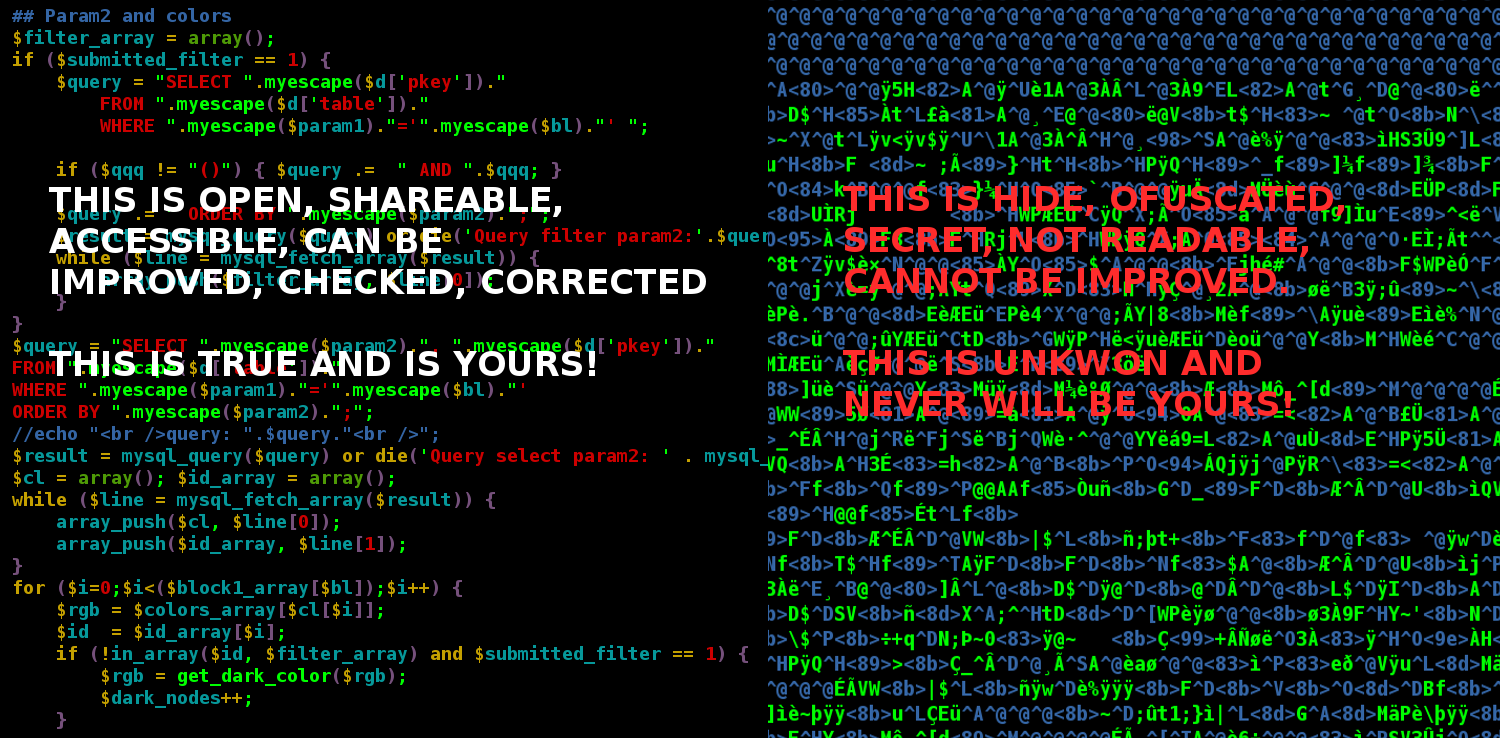

In digital technology, the process is the code of the software that takes the data, does some calculations and arrangements, and produces an output. If you don’t know how I process the data, nobody can say how good my process is; nobody can correct it, improve it, introduce changes, or propose new ideas using the same tool. Others can only look at it and talk about it.
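To make the recipe idea concrete, here is a minimal sketch of what a published process could look like. Everything in it is an assumption for illustration: the sample data, the column names (`district`, `year`, `budget`) and the function names are hypothetical, and a plain-text bar chart stands in for a real visualization.

```python
import csv
import io
from collections import defaultdict

# Hypothetical sample data standing in for a published open dataset.
SAMPLE_CSV = """district,year,budget
North,2012,120
North,2013,150
South,2012,80
South,2013,60
"""


def load_rows(text):
    """Parse CSV text into a list of dictionaries (the ingredients)."""
    return list(csv.DictReader(io.StringIO(text)))


def total_by_district(rows):
    """Sum the budget column per district (the calculation step)."""
    totals = defaultdict(int)
    for row in rows:
        totals[row["district"]] += int(row["budget"])
    return dict(totals)


def text_bar_chart(totals, unit=10):
    """Render one line per district, one '#' per `unit` of budget (the output)."""
    lines = []
    for name in sorted(totals):
        bar = "#" * (totals[name] // unit)
        lines.append(f"{name:<6}{bar} {totals[name]}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(text_bar_chart(total_by_district(load_rows(SAMPLE_CSV))))
```

Because every step is visible, anyone can check the sum, change the `unit` scale, or swap the text chart for another kind of output, and then share the result.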

In the last two years there have been a lot of conferences, workshops, hackathons, congresses, etc. involving open data, and a lot of new companies are trying to join the party. The problem is that we are focusing on producing nice visualizations as the end point of the dataset. We need to understand that we are just at the beginning of the data visualization age. We are still learning how to use data, how to represent it, and how to make decisions with the help of visual analysis.

I understand that some companies go for a non-free software business model, but when we talk about open data, or even better, public data, then the standard must be free software, or at least access to the code: pure open source applications.

No more public money spent on non-reusable magic apps built with our data!